Background

A while ago, I read a message on one of the ASP (Association of Shareware Professionals) private newsgroups announcing a new "Special of the Day" type website. It was to be called Bits du Jour - BDJ for short. BDJ would work along similar lines to Woot!, but would only feature software products. I thought “Wow, great idea... that's a fantastic way to promote a software product.”

Of course, the software vendor has to discount the product and provide a cut for BDJ, so the total revenue for each license would be much smaller than usual, but there is potential to reach a new crowd of people. It provides a way to promote the product at a lower price point, as a loss leader.

The Wait

I contacted Ellen Craw from BDJ and we exchanged a few emails. That was back in March 2006, and Automise 1.0 was still a month away from release. When you release a brand new product, how do you get the word out? Bits du Jour sounded like a great way to make a splash and build up a user base.

Automise retails for $195 US, and Ellen suggested that products priced over $20 don't sell so well on BDJ. It's all about impulse buys, and it sounds like twenty bucks is about the threshold. At the time we thought that $20 was just too cheap for a $200 product… no sale!

Time passed. Automise generated some sales and some interest, including some positive reviews, but we still didn’t have the widespread market penetration that we wanted.

So, in early November 2006, I thought we'd approach BDJ again. A 90% discount on any product is sure to generate a lot of new sales. We also figured that BDJ should have gained in popularity since its launch earlier in the year, and that there should be a reasonable number of people now subscribed to its RSS feeds and emails.

[As a side note, I bought Bar Genie through BDJ a few months back... it was a good deal and a nice reference for mixing cocktails!]

Preparation

We set a date - Wednesday 29th November 2006. Nothing special about it, but we chose a day in the middle of the week so that it wouldn’t overlap with a weekend or public holiday in any timezone.

Recently, Paul has done a considerable amount of work on our website store. This made it easy for us to set up a new coupon code that would last 24 hours. A few simple tests and we were ready.

Ellen drafted the BDJ article on Automise, and she also ran through the whole process to verify that everything held together.

D-Day

The special went live at midnight in the US (Central Standard Time). It was 5 in the afternoon here. I checked the BDJ site and Automise was on the home page. The 90% discount was highlighted with red stars on either side - there's no question that this was a big discount. :)

I sent out some emails to some of our existing customers, users that we maintain a good relationship with. The email notified them of the special on BDJ for Automise, and encouraged them to spread the news. :)

The first sale came through 17 minutes after the special went live. Bloody Great! :)

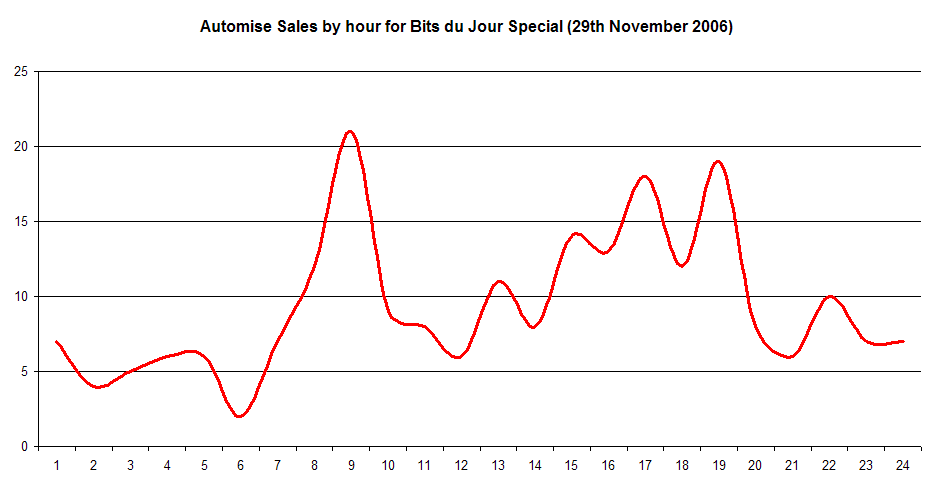

The next sale came in at 22 minutes past, then another at 31, and another at 38. “Wow... this is awesome.” In the first hour we made seven sales. Seven new customers. Excellent!

Then it went a bit quiet. Over the next six or seven hours there were between about two and six sales an hour. Still great though, and by this time it was late evening in Australia.

Now, I want to give you some background on our order process. To place an order for Automise or FinalBuilder, you need to be a registered user on our website. New registrations need to have their email addresses confirmed (via a link in an email.) After you’re registered, you can add items to your shopping cart and go through the checkout process. During checkout, there is a coupon field. This is where the BitsDuJour coupon needed to be added in order to grant the 90% discount.

After checkout, our store redirects to the WorldPay payment gateway. WorldPay handles all of the credit card processing. We receive a Web Services callback with the status of the order, and send an automated email to the customer. The order then goes into a “pending” state so that we can review it (e.g. to check for fraud), and then we click a button to process it. FinalBuilder and Automise aren't high turnover products, so this process works great and we catch 99.9% of fraud before the license key is sent out.
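For readers who like to see this kind of flow spelled out, here is a rough sketch of the order lifecycle described above. It is only an illustration: the state names, the `TRANSITIONS` table and the `advance` function are all hypothetical, and our actual store is not written this way.

```python
from enum import Enum, auto


class OrderState(Enum):
    """Hypothetical states, named after the steps described in the text."""
    CHECKOUT = auto()          # cart filled, coupon entered
    AWAITING_PAYMENT = auto()  # customer redirected to the payment gateway
    PENDING_REVIEW = auto()    # gateway callback received, waiting for manual check
    PROCESSED = auto()         # reviewed and approved, license key sent
    REJECTED = auto()          # payment failed or order flagged as fraud


# Which states an order may move to from each state (an assumption for this sketch).
TRANSITIONS = {
    OrderState.CHECKOUT: {OrderState.AWAITING_PAYMENT},
    OrderState.AWAITING_PAYMENT: {OrderState.PENDING_REVIEW, OrderState.REJECTED},
    OrderState.PENDING_REVIEW: {OrderState.PROCESSED, OrderState.REJECTED},
    OrderState.PROCESSED: set(),
    OrderState.REJECTED: set(),
}


def advance(current: OrderState, target: OrderState) -> OrderState:
    """Return the new state, or raise if the move skips a step."""
    if target not in TRANSITIONS[current]:
        raise ValueError(f"cannot go from {current.name} to {target.name}")
    return target
```

The point of the table is that an order can never jump straight from checkout to processed: it always has to pass through the manual review step first.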

Anyhow...I woke up the next morning and checked the pending orders. “Wow...” over 80 orders had come in during the night! Now someone had to process them. And guess what, that someone was me… It was a case of mixed emotions: heaps of sales, but a boring job manually checking and processing each one!

Sales continued to come in for the rest of the day. All up, the final count was 226 sales. We were all stoked, and a bit tired too. The sale had generated quite a bit of extra support work, and there were also some bugs that showed up in the order process.

I emailed Ellen to let her know how we went, and got the following reply:

"That's GREAT - you just broke all my records. Thank YOU!"

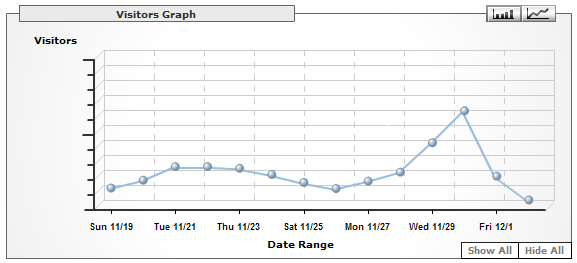

Also, traffic on the BDJ site was about 40% higher than usual. We tried to promote the special as much as we could. Some fairly high profile bloggers mentioned the deal, e.g. Roy Osherove (ISerializable) and Troy Magennis (LINQed IN). I'm sure a lot of traffic came to the site because of this.

Here's a graph of our sales volume over the 24 hour period:

And here's our daily website traffic. Because of our timezone the traffic is spread over both the Wednesday and Thursday. There's a pretty obvious spike!

So, there you have it. Overall it's been a great opportunity to feature Automise on Bits du Jour. If you're thinking about featuring your product on BDJ, and the software has a fairly general audience, then I reckon you should go for it! Thanks heaps to Ellen and Bits du Jour, the people who helped us promote the special, and especially to all our new customers. :)

Problems

Over the course of the sale, we experienced a few minor problems that we hadn’t noticed before.

1. One of the main problems was that the store would accept and validate the coupon code with a trailing space character ("BitsDuJour "), but would then reject the coupon when the Process Order button was pressed. Many people reported that they couldn't get the coupon code to work, and it wasn't until about 18 hours into the special that we figured out why and fixed the bug. We lost sales because of this - people emailed us and told us so.

2. Another weird problem was that some people (normally from Germany) had problems when the store redirected to WorldPay. This was caused by their regional settings putting a comma in the total amount instead of a decimal point. We fixed the bug, but also had to process some of these orders manually before we had figured out the cause.

3. We accidentally had heaps of references to FinalBuilder in the automated emails sent out for the store. This was more of an embarrassment than a problem, and we fixed it during the day as well.
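
The first two bugs are common enough that a small sketch might be useful to other vendors. This is not our store's code: `normalize_coupon`, `coupon_is_valid`, `VALID_COUPONS` and `format_amount` are hypothetical names, and the sketch assumes the general fix is to clean up the coupon in one place and to format the total without consulting the user's regional settings.

```python
from decimal import Decimal, ROUND_HALF_UP


def normalize_coupon(raw: str) -> str:
    """Strip stray whitespace so every step of checkout sees the same code."""
    return raw.strip()


# Hypothetical coupon table; a real store would look this up in its database.
VALID_COUPONS = {"BitsDuJour"}


def coupon_is_valid(raw: str) -> bool:
    """Validate the normalized code, never the raw text the user typed."""
    return normalize_coupon(raw) in VALID_COUPONS


def format_amount(amount: Decimal) -> str:
    """Format a total with a '.' decimal separator, whatever the locale.

    Formatting a Decimal with "f" does not consult the locale, so a user
    with German regional settings still gets "19.50" rather than "19,50".
    """
    rounded = amount.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
    return format(rounded, "f")
```

With this, `coupon_is_valid("BitsDuJour ")` is true at both the validation step and the final processing step, because both go through the same `normalize_coupon` call, and `format_amount(Decimal("19.5"))` gives `"19.50"` for the payment gateway.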

What we've learnt

1. Bits du Jour was a great idea. The customer base for Automise has grown, and there are a lot of happy people. The price was low enough to create impulse purchases, and will hopefully act as a loss leader to make Automise better known.

2. There were some bugs in the store that we should have known about. More testing required!

3. The order process is too difficult. A lot of people got a bit peeved at the entire process, and I'm sure some people gave up. There was a short thread on the Joel on Software forums with some criticism of the process:

https://discuss.joelonsoftware.com/default.asp?biz.5.421152.9

4. Promotion is very important. See below for mentions of the Automise special which appeared on various blogs. I posted the deal on a couple of mailing lists as well. This made a huge difference.

Links from the Blogosphere, and other coverage of the BDJ Automise special

https://wesnerm.blogs.com/net_undocumented/2006/11/components.html

"Coincidentally, his post today is offering Automise for $19 (down from $199) for 24 hours in Bits du Jour, a site, much like Woot, offering new discounted software daily."

https://aspiring-technology.com/blogs/troym/archive/2006/11/29/63.aspx

"Todays deal caught my eye - its 90% off. Automise - a general purpose automation tool (think GUI based scripting and debugging)."

https://weblogs.asp.net/rosherove/archive/2006/11/28/cool-promotion-automise-for-20-for-24h-window.aspx

"A little bird tells me that in a few hours you'll be able to get Automise (that's the system admin's version of FinalBuilder - my favorite build automation tool) over here for a 90% discount - less than $20 instead of $195 for a 24 hour window."

https://mortonfox.livejournal.com/465710.html

"Got a copy of Automise at a 90% discount, thanks to Bits du Jour. When I have more time, I'll dig deeper into the software to see if it's really that much better than AutoHotkey. I have Bits du Jour in my del.icio.us bookmarks, but I'd forgotten all about it until IanH. mentioned the deal on the Joel on Software discussion forum."

https://midspot.wordpress.com/2006/11/29/automise-at-a-great-deal/

"Automise does it for you. I downloaded the trial last night and it is pretty slick and powerful. Try it for free, and today only you can get a huge discount on the product."

Some other user comments

"Holy discount, Batman! I almost feel duty bound to buy this 'cos of the 90% off! Nice job BDJ and Automise!"

"Thank you very much for this generous rebate!"

"Very cool tool!

I have tested many automation tools and Automise is one of the best. When I saw this promotion this morning I just bought it - thank you!"

"I've heard good things about it, and at 90% off, you can't really go wrong, can you?"